For the November meetup we return to the format of the first DataScienceSeed meetups, with two talks: the first covers the history of OCR, the second covers Anomaly Detection applied to optimization problems. The two talks will be followed by the usual Q&A session and the inevitable focaccia-based refreshments.

The event takes place on Thursday, November 30, starting at 18:00, at the Ordine degli Ingegneri della Provincia di Genova, Piazza della Vittoria 11/10.

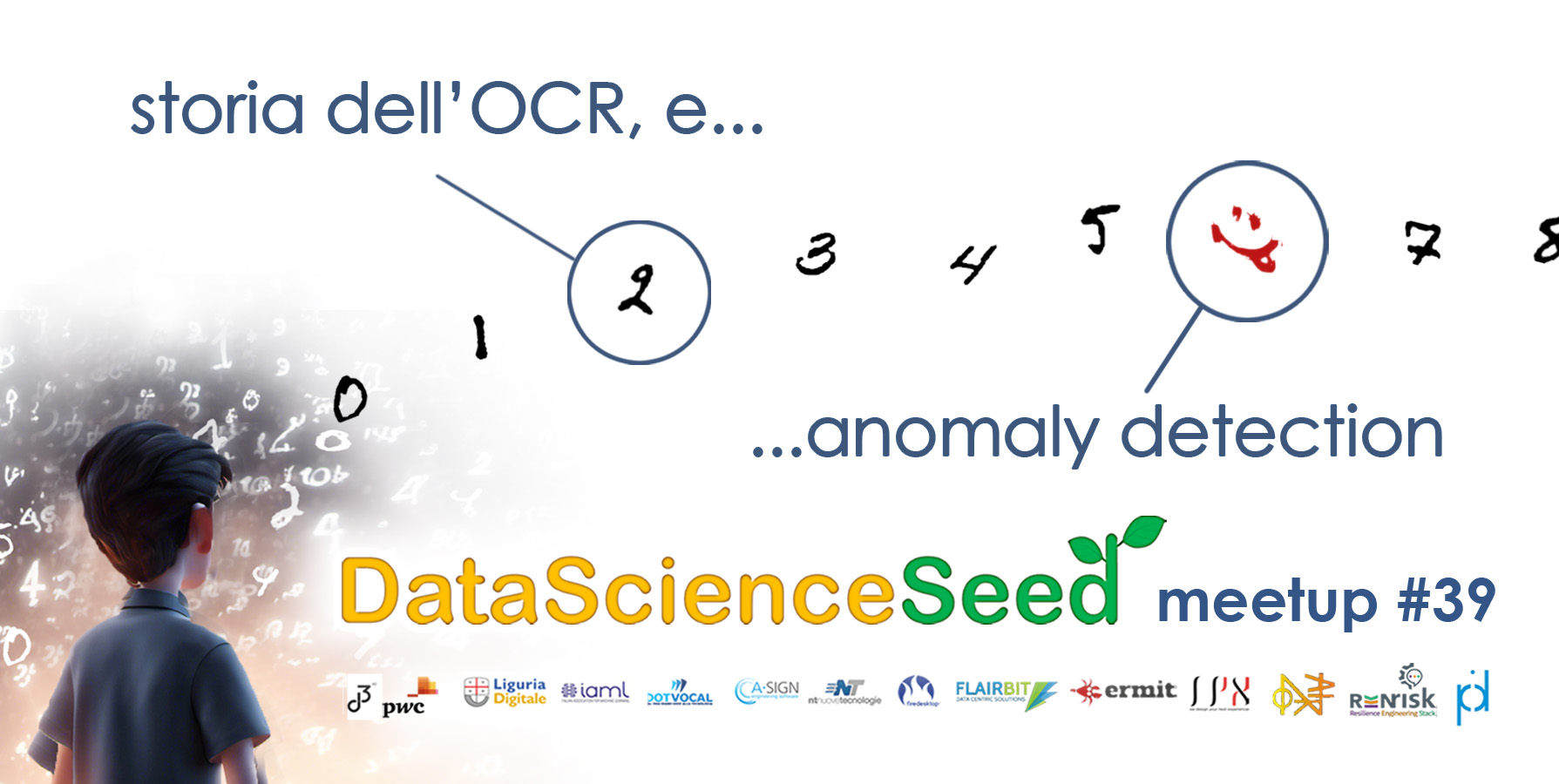

MNIST: birth, life and death of a public dataset.

The first talk tells the story of OCR (Optical Character Recognition), which over the years went from a research goal to a plain commodity, and of the crucial role the MNIST dataset played along the way in the development of deep learning. MNIST was one of the first image datasets used to train convolutional neural networks (CNNs), a type of neural network that is particularly effective for image classification.

The story starts from the early machine learning approaches, continues with the results of Yann LeCun, who used this very dataset to give a first demonstration of the potential of CNNs for image classification and thereby contributed to a new wave of interest in deep learning, and ends with modern transformer architectures.

The talk will also cover the role of Italian research along this path, with some important successes and some missed opportunities.

Ennio Ottaviani is a theoretical physicist, industrial researcher and entrepreneur. He is the scientific director of OnAIR, where he coordinates research projects on AI applications across several industry and service sectors. He teaches Predictive Methods (Metodi Predittivi) in the Mathematical Statistics degree program at the University of Genoa. Ennio has already been a guest of DataScienceSeed, with a very interesting talk on Quantum Computing and Data Science.

Discovering anomalies in big data with Machine Learning and Metaheuristics

The second talk of the day covers Anomaly Detection, the integration of Metaheuristics into Machine Learning, and optimization algorithms.

Anomaly Detection is a field of artificial intelligence concerned with identifying anomalous data points within a dataset. Anomalies can stem from various factors, such as measurement errors, unexpected events or malicious attacks. The talk gives an overview of anomalies in areas such as industrial control systems, intrusion detection systems, the analysis of climate events, urban traffic, fake news and much more.
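
To make the idea concrete, here is a minimal sketch of one simple way to flag anomalous values: a modified z-score based on the median absolute deviation (MAD). This is not the method presented in the talk. The function name `mad_anomalies`, the sample `readings` and the 3.5 threshold are illustrative assumptions.

```python
import statistics


def mad_anomalies(values, threshold=3.5):
    """Return the indices of values whose modified z-score exceeds threshold.

    Hypothetical helper for illustration only; the threshold is an assumption.
    """
    median = statistics.median(values)
    abs_dev = [abs(v - median) for v in values]
    mad = statistics.median(abs_dev)
    if mad == 0:
        # No spread around the median: this rule cannot flag anything.
        return []
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [i for i, d in enumerate(abs_dev) if 0.6745 * d / mad > threshold]


# Made-up sensor readings with one obvious outlier at index 4.
readings = [20.1, 19.8, 20.3, 20.0, 35.7, 19.9, 20.2]
print(mad_anomalies(readings))
```

The median and the MAD are used instead of mean and standard deviation because a single extreme value distorts them much less.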

Metaheuristics are a class of optimization algorithms that use an exploration/exploitation strategy to find an optimal or near-optimal solution to a problem. They are often used on complex problems where traditional algorithms may fail. Integrating Metaheuristics into Anomaly Detection can improve the accuracy and robustness of anomaly detection systems.
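
As an illustration of the exploration/exploitation trade-off, the sketch below uses simulated annealing, one well-known metaheuristic, to minimize a one-dimensional function with several local minima. It is not taken from the talk. The function `simulated_annealing`, its parameters and the test function `bumpy` are all assumptions chosen for the example.

```python
import math
import random


def simulated_annealing(cost, start, step=1.0, t_start=10.0, t_end=1e-3,
                        cooling=0.95, seed=0):
    """Minimize cost(x) over real x; returns (best_x, best_cost).

    Illustrative sketch: all parameter defaults are assumptions.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    current, current_cost = start, cost(start)
    best, best_cost = current, current_cost
    temperature = t_start
    while temperature > t_end:
        candidate = current + rng.uniform(-step, step)
        candidate_cost = cost(candidate)
        delta = candidate_cost - current_cost
        # Exploitation: improvements are always accepted.
        # Exploration: a worse move is accepted with probability exp(-delta / T),
        # which shrinks as the temperature drops.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, current_cost = candidate, candidate_cost
            if current_cost < best_cost:
                best, best_cost = current, current_cost
        temperature *= cooling  # geometric cooling schedule
    return best, best_cost


def bumpy(x):
    # Hypothetical test function with more than one local minimum.
    return x * x + 10 * math.sin(x)


best_x, best_cost = simulated_annealing(bumpy, start=5.0)
print(best_x, best_cost)
```

At high temperature the search accepts many worsening moves and roams widely. As the temperature falls it behaves more and more like a greedy local search. The result is the best point seen, with no guarantee of being the global optimum.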

Claudia Cavallaro does research in Computer Science, focusing on Big Data, Optimization and Metaheuristics. She teaches at the University of Catania, in the bachelor's and master's degree programs in Computer Science, where she holds the courses “Strutture Discrete” (Discrete Structures) and “Heuristics and metaheuristics for optimization and learning”. She recently spoke at the conferences ITADATA 2023 (The 2nd Italian Conference on Big Data and Data Science) and WIVACE 2023 (XVII International Workshop on Artificial Life and Evolutionary Computation). She started working on Anomaly Detection research during her post-doc at CNAF-I.N.F.N. in Bologna.
